Don’t Throw Away Your Phishing Program …Yet

I’m hearing more and more discussions on how the act of phishing employees actually creates more harm than good. The arguments in favor of ditching your phishing program are compelling.

It can tick off employees and create a rift between them and the security team in several ways:

- Enticement/misleading promises

We’ve heard of companies using phish templates that promise enticing things like bonuses, free products, etc. The employee gets excited (feels good) just to learn it was a company exercise (feels tricked). Resentment toward the security team ensues.

- False Positive Results

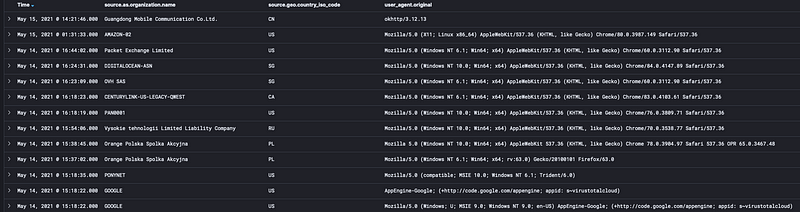

These are common and are usually due to other security tools “checking” the links. See Nathan Hunstad’s post on the latest issue we noticed with our phishing program. A false positive shows up as a click on that employee’s account even if they never clicked. In fact, they likely saw and reported the email in good, secure fashion. Any additional training that ensues from this “click” is unfair and bound to cause resentment.

- Training overload

As if our employees don’t already have enough required security trainings, some companies send additional training to “clickers”. This results in training overload, burnout and resentment. Not to mention it’s ineffective.

- Smack Down

A new employee joins the company, is excited about the new opportunity, has good will all over the place and then gets “tricked” by the security team with a phish. All that was accomplished was either embarrassment, disappointment or even resentment toward the security team and potentially against the new employer. Eek.

These are real concerns to which security teams need to pay close attention. But the answer is not to throw the phishing program out the window. A GREAT phishing program can avoid these pitfalls and at the same time strengthen defenses against phishing, a long-standing vector for attackers that is noted year over year in the Verizon Data Breach Investigations Report (VDBIR), especially in successful breaches.

Email filters can be effective and greatly reduce the number of malicious attempts that actually make it to your employees. But they don’t catch 100% of the risks, and even if only 1–3% of the attempts make it through, it only takes one click, as you are well aware, for the attacker to get the upper hand. The likelihood is low but the impact ranges from high to incredibly high, depending on the damage that could ensue. Until that magical day when our filters are guaranteed to catch every malicious email that comes our way, you can quite easily engage your employees to be your human firewall without damaging relationships or the reputation of your security team.

To do so you’re going to need a plan and the right folks to carry it out. Luckily it’s easier than you think, but you’ll need to make the INTENT of the program clear to all and then follow up with a risk-based approach. If damage has already been done at your organization, it might make sense to take a few months off to give your employees some time to cool off and to give yourself time to rewrite your program. It’s doable. At Code42 we’ve brought our click rates from upwards of 25% to less than 3% on average, and our employees actually enjoy the challenge. We didn’t get there overnight. As you’d expect with any good outcome, it took time and patience. Here’s how we did it.

You Need Their Help

It is so critical that employees know why you phish them, what it means for you and for them and how they can be a part of reducing risk for the company. They have a vested interest in the success of the company — that’s where their paycheck comes from and where they spend a ton of their time. They also likely hold pride in their work. So most everyone will understand it when you tell them that the security team alone cannot protect the company. We need them to help keep the company secure. We won’t be nearly as successful without them. Instilling this call for help appeals to most people. If that alone doesn’t do it for everyone, read on.

Intent

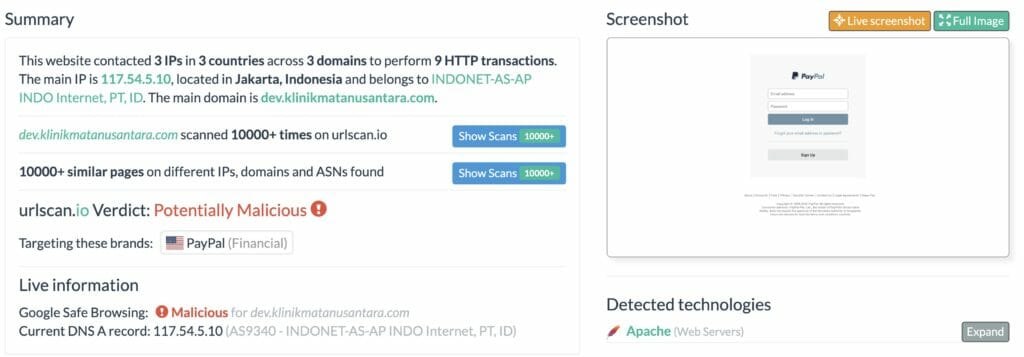

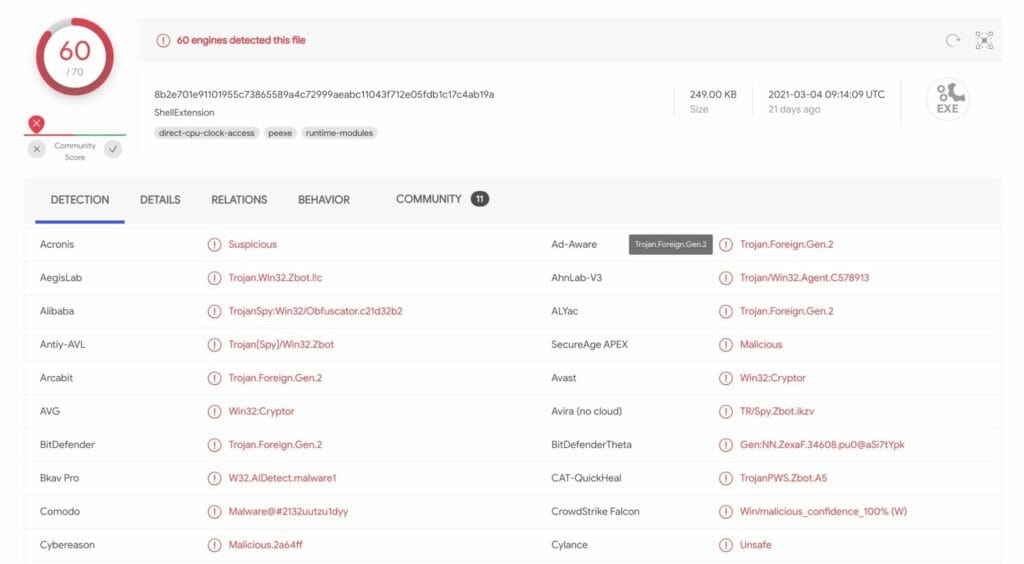

Your employees must know your intention for the program. It should never, ever, ever be about trying to “catch” anyone. In fact if you are choosing templates because you are sure it will cause people to fall for it, you better be darn sure that it is a template that you see coming from the wild and making it past your filters. If not, what risk are you addressing? Work with the team who sees what types of phish emails are making it past your filters. That is your real risk — that’s what you should mimic. Anything else is a futile exercise that wastes time, achieves little and frustrates many.

If you are seeing phish attempts in the wild that may hit close to the heart of your employees, such as free virus testing or vaccines, consider informing all users about them with a screenshot rather than sending the phish. This is a more ethical approach and will help avoid the emotional roller coaster of good things promised followed by what they’ll perceive as a slap in the face. Believe me, I learned the hard way on this one.

The Goal

Make it clear that it is not your desire to “catch” anyone. In fact, the goal is to catch absolutely no one (achieve the elusive 0% click rate). To that end you are going to give them opportunities to practice, because we aren’t good at anything in life unless we practice. Natural abilities only get us so far.

Because our click rate is so low, at Code42 I have the luxury of following up with everyone who clicks after each exercise to learn more about what happened. I tell them at onboarding to expect me to reach out, but only for two reasons. 1) To check whether it was a real click or whether technology interfered (see Nathan’s post mentioned above). If they say they didn’t click, I will absolutely believe them. I’ve had colleagues laugh at what appears to be gullible innocence. The way I see it, what’s the point of being skeptical or cynical when it comes to an important relationship? And what relationships succeed without trust? 2) If they did in fact click, to ask what happened (they usually offer it up first) so I can learn where our employees are failing and use that real-world information for further education, for them but importantly for others too (with no names mentioned), about the pitfalls we are actually seeing.

What to do about the clickers

We’re human; that’s why phishing works for attackers. So a single click in an exercise should not be seen as a risk to the company. Quite the contrary: guess who is least likely to engage with the next phish, perhaps a real one? This group.

Frequent or repeat clickers are the risk you want to work on. Know who these folks are and make sure you have ruled out false positives. As a result of the work Nathan describes in his above-mentioned blog, we reached out to our phishing vendor and learned that we could easily identify those false positives in our dashboard and remove those clicks from employees’ records. If you are a large company you may have to automate this or take a little time with some VLOOKUPs. It is time well spent. No one wants to have a strike against them that they didn’t cause.
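If you do end up automating the cleanup, here is a minimal sketch of the idea in Python. It assumes your vendor's data can be reduced to rows with an employee, a campaign and a flag for clicks attributed to automated link scanning. The field names and sample rows are hypothetical, not any vendor's actual export format.

```python
from collections import Counter

# Hypothetical export rows. Field names are assumptions, not a vendor schema.
clicks = [
    {"employee": "alice", "campaign": "Q1", "scanner_click": True},
    {"employee": "alice", "campaign": "Q2", "scanner_click": False},
    {"employee": "bob", "campaign": "Q1", "scanner_click": False},
    {"employee": "bob", "campaign": "Q2", "scanner_click": False},
    {"employee": "carol", "campaign": "Q1", "scanner_click": True},
]


def real_clicks(rows):
    """Drop clicks that were flagged as coming from a security tool."""
    return [row for row in rows if not row["scanner_click"]]


def clicks_per_employee(rows):
    """Count only the verified (non-scanner) clicks for each employee."""
    return Counter(row["employee"] for row in real_clicks(rows))


def repeat_clickers(rows, minimum=2):
    """Return employees with at least `minimum` verified clicks, sorted by name."""
    counts = clicks_per_employee(rows)
    return sorted(name for name, count in counts.items() if count >= minimum)


print(repeat_clickers(clicks))
```

In this made-up data, carol's only "click" came from a scanner, so she never shows up in the counts at all, which is the whole point of doing the cleanup first.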

After you’ve removed false positives, the number of repeat clickers should be low. You need to connect with them to find out what’s going on. They need more direction, meaning a one-on-one discussion with someone on your team. I haven’t run into anyone who repeatedly clicks, but that doesn’t mean they don’t exist. So you should build a process into your program that defines a threshold after which to engage the employee’s manager, then the department leader, then HR. If someone continues to click after being talked with and further educated, it may indicate they truly don’t care about the company, and you likely have a bigger problem on your hands than a trigger finger.
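The escalation process can be as simple as a small lookup. The sketch below only illustrates the order described above (manager, then department leader, then HR). The click counts attached to each step are hypothetical placeholders, so pick thresholds that fit your own program.

```python
# Hypothetical thresholds: number of verified clicks (false positives already
# removed) at which each step applies. The numbers are placeholders.
ESCALATION_STEPS = [
    (1, "friendly check-in, no names reported"),
    (2, "one-on-one discussion with the security team"),
    (3, "engage the employee's manager"),
    (4, "engage the department leader"),
    (5, "engage HR"),
]


def escalation_step(verified_clicks):
    """Return the highest step whose threshold the click count has reached."""
    step = "no action"
    for threshold, action in ESCALATION_STEPS:
        if verified_clicks >= threshold:
            step = action
    return step


for count in (0, 1, 3, 7):
    print(count, "->", escalation_step(count))
```

Writing the steps down like this, even in a document rather than in code, makes it easy to tell employees up front exactly what will and won’t happen if they click.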

There is no need to give leadership the names of one-time or infrequent clickers. Leaders, please don’t ask for these. Doing so can only result in a futile, ineffective exercise in shaming. In fact, tell your employees at the start of your program or at onboarding that if they slip up they will not be reported by name. Department heads may get result metrics for their area but with no names attached. Now if the employee slips up over and over again, that’s a different story.

Training

Train your employees on how to recognize a suspicious email BEFORE you start your phishing program. It’s not fair to test them without training them and it will feel like trickery.

Automatically assigning training to folks who click is also likely to tick them off. Besides, if they didn’t learn from your prior training, more of the same isn’t likely to be effective. They need one-on-one attention. If you don’t have the staff to do this, consider slowing down your phishing program to give the analyst(s) who run it the time to connect with folks. Just make sure their intent is to assist the person, not slap them on the wrist. See the Intent section above.

Run a Risk Based Program

We already discussed choosing risk-based templates and how to help out truly risk-prone users. Next, adjust frequency to meet your goals. If your numbers are in the acceptable range for your company (VDBIR states that the average these days is ~3%) then maybe it will be sufficient to send a phish quarterly. Free up those analysts for other work if your numbers are low. Continuing to phish for the same results is not a good use of anyone’s time. Alternatively, you can take a look to see if there are some groups not meeting that threshold. If that is the case, focus on those groups. Use templates that are specific to them and that you are seeing in the wild.
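Checking groups against your threshold is simple arithmetic. Here is a minimal sketch, assuming you can export verified clicks and delivered emails per group. The group names and counts are made up, and the 3% threshold is just the figure mentioned above, so substitute whatever your company considers acceptable.

```python
# The ~3% figure cited above, used here as an example threshold.
ACCEPTABLE_CLICK_RATE = 0.03

# Hypothetical results: group -> (verified clicks, emails delivered).
results = {
    "engineering": (2, 200),
    "sales": (9, 150),
    "finance": (1, 100),
}


def click_rate(clicks, delivered):
    """Fraction of delivered emails that were clicked (0.0 if none delivered)."""
    return clicks / delivered if delivered else 0.0


def overall_rate(results):
    """Company-wide click rate across all groups."""
    total_clicks = sum(clicks for clicks, _ in results.values())
    total_delivered = sum(delivered for _, delivered in results.values())
    return click_rate(total_clicks, total_delivered)


def groups_needing_focus(results, threshold=ACCEPTABLE_CLICK_RATE):
    """Groups whose click rate is above the acceptable threshold, sorted by name."""
    return sorted(
        group
        for group, (clicks, delivered) in results.items()
        if click_rate(clicks, delivered) > threshold
    )


print(f"overall: {overall_rate(results):.1%}")
print("focus on:", groups_needing_focus(results))
```

In this made-up data the company as a whole is under the threshold while one group is well above it, which is exactly the case where slowing the company-wide cadence and focusing on that group makes sense.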

Communicate, Communicate, Communicate

This is not just checking the box that you sent an email or put an article on the company intranet or messaging app. The goal is to influence. If that is not your forte, study up. There are books on sales tactics and on creating meaningful, memorable messages, like Made to Stick by Chip and Dan Heath. Search YouTube. Just know that if you want to be effective, you need to persuade. A good company-wide communication would be about how the company is improving. Discuss success rates rather than click rates. It may seem subtle but it is all about rewarding good behavior.

Get time during onboarding. If the team who does your onboarding says they can’t possibly fit you in, negotiate, even if you only get five minutes. If you can’t get that, help them truly understand the impact a few minutes of onboarding time will have on reducing risk to the company. During onboarding, talk about the phishing program and your intentions around it. Be positive and sincere and let them know that the skills they will learn will benefit them and their families at home too. You can even sell it as a gamified way of learning. Don’t underestimate your energy. Selling this “opportunity” is worth its weight in gold.

It’s always good to show that leadership supports the phishing program as well. If you don’t have their support, work to get it. If you have it, a message from the CEO or CISO or other C-Suite executive can help build good will around the program. Just make sure it conveys only positive intent and is not a finger shaking message.

So there you have it. You have a choice. Throw out your phishing program and accept the risks that opens up to attackers, or create a GREAT phishing program that is effective and well received by your employees. There really isn’t a middle ground here. The latter is more nuanced and will take some time, but it is doable. We’ve achieved it at Code42 and so can you.

The security team at Code42 is passionate about improving security everywhere. You’ll find more great security blogs by our thought leaders at redblue42.com. Or feel free to reach out to me on LinkedIn.